SenseVoicecpp ggml-cann.cpp大模型[AI人工智能(七十六)]—东方仙盟

我们坚信,每一份原创智慧都值得被尊重与回馈,以永久分成锚定共创初心,让创意者长期享有价值红利,携手万千伙伴向着科技星辰大海笃定前行,拥抱硅基生命与数字智能交融的未来,共筑跨越时代的数字文明共同体。在智能时代的浪潮里,单打独斗的发展模式早已落幕,唯有开放连接、创意共创、利益共享,才能让个体价值汇聚成生态合力,让技术与创意双向奔赴,实现平台与伙伴的快速成长、共赢致远。,负责把 ggml 的张量计算指令

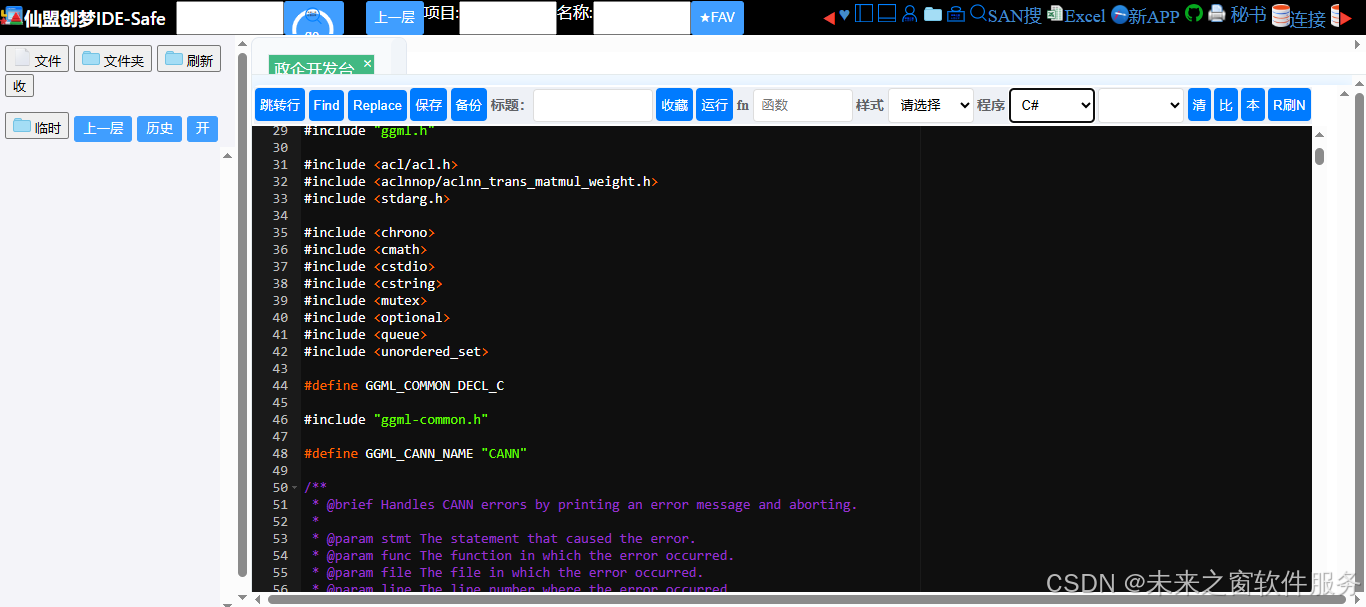

ggml-cann.cpp

核心代码

完整代码

/*

* Copyright (c) 2023-2026 The ggml authors

*

* Permission is hereby granted, free of charge, to any person obtaining a copy

* of this software and associated documentation files (the "Software"), to

* deal in the Software without restriction, including without limitation the

* rights to use, copy, modify, merge, publish, distribute, sublicense, and/or

* sell copies of the Software, and to permit persons to whom the Software is

* furnished to do so, subject to the following conditions:

*

* The above copyright notice and this permission notice shall be included in

* all copies or substantial portions of the Software.

*

* THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

* IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

* FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

* AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

* LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING

* FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS

* IN THE SOFTWARE.

*/

#include "ggml-cann.h"

#include "ggml-backend-impl.h"

#include "ggml-cann/aclnn_ops.h"

#include "ggml-cann/common.h"

#include "ggml-impl.h"

#include "ggml.h"

#include <acl/acl.h>

#include <aclnnop/aclnn_trans_matmul_weight.h>

#include <stdarg.h>

#include <chrono>

#include <cmath>

#include <cstdio>

#include <cstring>

#include <mutex>

#include <optional>

#include <queue>

#include <unordered_set>

#define GGML_COMMON_DECL_C

#include "ggml-common.h"

#define GGML_CANN_NAME "CANN"

/**

* @brief Handles CANN errors by printing an error message and aborting.

*

* @param stmt The statement that caused the error.

* @param func The function in which the error occurred.

* @param file The file in which the error occurred.

* @param line The line number where the error occurred.

* @param msg The error message.

*/

[[noreturn]] void ggml_cann_error(const char * stmt, const char * func, const char * file, int line, const char * msg) {

int32_t id = -1;

aclrtGetDevice(&id);

GGML_LOG_ERROR("CANN error: %s\n", msg);

GGML_LOG_ERROR(" current device: %d, in function %s at %s:%d\n", id, func, file, line);

GGML_LOG_ERROR(" %s\n", stmt);

// abort with GGML_ASSERT to get a stack trace

GGML_ABORT("CANN error");

}

// Thread-local variable to record the current device of this thread.

thread_local int g_current_cann_device = -1;

/**

* @brief Set the CANN device to be used.

*

* @param device The target device ID to set.

*/

void ggml_cann_set_device(const int32_t device) {

// int current_device = -1;

// Note: In some CANN versions, if no device has been set yet,

// aclrtGetDevice(¤t_device) may return 0 by default.

// aclrtGetDevice(¤t_device);

// If the current device is already the target one, no need to switch.

if (device == g_current_cann_device) {

return;

}

// Switch to the new device.

ACL_CHECK(aclrtSetDevice(device));

// Update the global device record.

g_current_cann_device = device;

}

/**

* @brief Get the value of the specified environment variable (name) as lowercase.

* if not empty, return a std::string object

*/

std::optional<std::string> get_env_as_lowercase(const std::string & name) {

const char * val = std::getenv(name.c_str());

if (!val) {

return std::nullopt;

}

std::string res = std::string(val);

std::transform(res.begin(), res.end(), res.begin(), ::tolower);

return res;

}

/**

* @brief Verify whether the environment variable is a valid value.

*/

bool parse_bool(const std::string & value) {

static const std::unordered_set<std::string> valid_values = { "on", "1", "yes", "y", "enable", "true" };

return valid_values.find(value) != valid_values.end();

}

/**

* @brief Parse a string as an integer, returning 0 if invalid.

*

* This function attempts to convert the input string `value` to an `int`.

* If the string is not a valid integer or is out of the `int` range,

* it returns 0.

*

* @param value The string to parse.

* @return The parsed integer, or 0 if conversion fails.

*/

int parse_integer(const std::string & value) {

try {

return std::stoi(value);

} catch (...) {

return 0;

}

}

/**

* @brief Initialize the CANN device information.

*

* This function initializes the CANN device information by obtaining the

* device count and setting the memory allocation granularity for each device.

*

* @return A structure containing the device information.

*/

static ggml_cann_device_info ggml_cann_init() {

ggml_cann_device_info info = {};

aclError err = aclrtGetDeviceCount((uint32_t *) &info.device_count);

if (err != ACL_SUCCESS) {

GGML_LOG_ERROR("%s: failed to initialize CANN: %s\n", __func__, aclGetRecentErrMsg());

return info;

}

GGML_ASSERT(info.device_count <= GGML_CANN_MAX_DEVICES);

for (int id = 0; id < info.device_count; ++id) {

aclrtPhysicalMemProp prop = {};

prop.handleType = ACL_MEM_HANDLE_TYPE_NONE;

prop.allocationType = ACL_MEM_ALLOCATION_TYPE_PINNED;

prop.memAttr = ACL_HBM_MEM_HUGE;

prop.location.type = ACL_MEM_LOCATION_TYPE_DEVICE;

prop.location.id = id;

prop.reserve = 0;

err = aclrtMemGetAllocationGranularity(&prop, ACL_RT_MEM_ALLOC_GRANULARITY_RECOMMENDED,

&info.devices[id].vmm_granularity);

info.devices[id].vmm = err == ACL_SUCCESS;

size_t free, total;

ggml_backend_cann_get_device_memory(id, &free, &total);

info.devices[id].total_vram = free;

}

// TODO: add more device info later.

return info;

}

/**

* @brief Retrieve the CANN device information.

*

* This function returns a reference to a structure containing the CANN device

* information. The device information is initialized once and reused on

* subsequent calls.

*

* @return A reference to the structure containing the device information.

*/

const ggml_cann_device_info & ggml_cann_info() {

static ggml_cann_device_info info = ggml_cann_init();

return info;

}

//#define DEBUG_CANN_MALLOC

/**

* @brief A pool of CANN buffers(priority segment buffer).

*

* This class manages a pool of CANN buffers for a specific device.

*/

struct ggml_cann_pool_buf_prio : public ggml_cann_pool {

/**

* @brief The maximum reuse margin for a buffer.

*/

static const size_t max_reuse_margin = 1ull << 22; // 4MB

/**

* @brief The minimum free margin for a buffer.

*/

static const size_t min_free_margin = 1ull << 20; // 1MB

/**

* @brief The alignment for buffer allocation.

*/

static const size_t alignment = 128;

/**

* @brief The device ID associated with this buffer pool.

*/

int device;

/**

* @brief Whether to disable clean during buffer allocation.

*/

bool disable_clean = false;

/**

* @brief Structure representing a CANN buffer.

*/

struct ggml_cann_buffer {

void * ptr = nullptr; ///< Pointer to the buffer.

size_t size = 0; ///< Size of the buffer.

std::chrono::steady_clock::time_point last_used; ///< Last used time.

bool operator>(const ggml_cann_buffer & other) const { return size > other.size; }

};

/**

* @brief Array of CANN buffers in the pool.

*/

std::unordered_map<void *, size_t> buffer_pool;

std::priority_queue<ggml_cann_buffer, std::vector<ggml_cann_buffer>, std::greater<>> free_buffers;

/**

* @brief Total size of all buffers in the pool.

*/

size_t pool_size = 0;

/**

* @brief Constructor to initialize the buffer pool for a specific device.

*

* @param device The device ID to associate with this buffer pool.

*/

explicit ggml_cann_pool_buf_prio(int device) : device(device) {

disable_clean = parse_bool(get_env_as_lowercase("GGML_CANN_DISABLE_BUF_POOL_CLEAN").value_or(""));

}

/**

* @brief Destructor to free all buffers in the pool.

*/

~ggml_cann_pool_buf_prio() {

ggml_cann_set_device(device);

for (auto & [b_ptr, b_size] : buffer_pool) {

aclrtFree(b_ptr);

pool_size -= b_size;

}

buffer_pool.clear();

GGML_ASSERT(pool_size == 0);

}

/**

* @brief Allocate a buffer of the given size.

*

* @param size The size of the buffer to allocate.

* @param actual_size A pointer to a variable to receive the actual size of

* the allocated buffer.

* @return A pointer to the allocated buffer.

*/

void * alloc(size_t size, size_t * actual_size) override {

size = GGML_PAD(size, alignment);

if (size == 0) {

size = alignment;

}

void * ptr = nullptr;

auto now = std::chrono::steady_clock::now();

std::vector<ggml_cann_buffer> free_buffers_rest;

free_buffers_rest.reserve(free_buffers.size());

while (!free_buffers.empty()) {

auto b = free_buffers.top();

free_buffers.pop();

if (b.size >= size) {

// reuse the buffer if the size is enough

const size_t margin = b.size - size;

if (margin <= max_reuse_margin) {

*actual_size = b.size;

ptr = b.ptr;

#ifdef DEBUG_CANN_MALLOC

GGML_LOG_INFO(

"cann pool[%d]: reused %p, "

"pool_size = %5u MB, "

"size = %5u MB, "

"margin = %5u MB\n",

device, b.ptr, (uint32_t) (GGML_PAD(pool_size, 1048576) / 1048576),

(uint32_t) (GGML_PAD(size, 1048576) / 1048576),

(uint32_t) (GGML_PAD(margin, 1048576) / 1048576));

#endif

break;

}

}

bool should_clean = !disable_clean && b.size > min_free_margin &&

std::chrono::duration_cast<std::chrono::milliseconds>(now - b.last_used).count() > 100;

if (should_clean) {

// free the buffer if the size is needed to be freed

ACL_CHECK(aclrtFree(b.ptr));

pool_size -= b.size;

buffer_pool.erase(b.ptr);

#ifdef DEBUG_CANN_MALLOC

GGML_LOG_INFO(

"cann pool[%d]: clean %p, "

"pool_size = %5u MB, "

"size = %5u MB\n",

device, b.ptr, (uint32_t) (GGML_PAD(pool_size, 1048576) / 1048576),

(uint32_t) (GGML_PAD(b.size, 1048576) / 1048576));

#endif

continue;

}

free_buffers_rest.push_back(b);

}

for (ggml_cann_buffer & b : free_buffers_rest) {

free_buffers.push(std::move(b));

}

#ifdef DEBUG_CANN_MALLOC

GGML_LOG_INFO("cann pool[%d] free pool_size = %5u MB\n\n", device,

(uint32_t) (GGML_PAD(pool_size, 1048576) / 1048576));

#endif

if (ptr != nullptr) {

return ptr;

}

// allocate a new buffer if no buffer can be reused

ggml_cann_set_device(device);

ACL_CHECK(aclrtMalloc(&ptr, size, ACL_MEM_MALLOC_HUGE_FIRST));

*actual_size = size;

pool_size += size;

#ifdef DEBUG_CANN_MALLOC

GGML_LOG_INFO(

"cann pool[%d]: allocate %p, "

"pool_size = %5u MB, "

"size = %5u MB\n",

device, ptr, (uint32_t) (GGML_PAD(pool_size, 1048576) / 1048576),

(uint32_t) (GGML_PAD(size, 1048576) / 1048576));

#endif

buffer_pool.emplace(ptr, size);

return ptr;

}

/**

* @brief Free a buffer and return it to the pool.

*

* @param ptr Pointer to the buffer to free.

* @param size Size of the buffer to free.

*/

void free(void * ptr, size_t size) override {

GGML_UNUSED(size);

auto it = buffer_pool.find(ptr);

if (it == buffer_pool.end()) {

GGML_ABORT("cann pool[%d]: buffer %p not found in pool\n", device, ptr);

}

auto now = std::chrono::steady_clock::now();

free_buffers.emplace(ggml_cann_buffer{ ptr, it->second, now });

#ifdef DEBUG_CANN_MALLOC

GGML_LOG_INFO(

"cann pool[%d]: return %p, "

"pool_size = %5u MB\n",

device, ptr, (uint32_t) (GGML_PAD(pool_size, 1048576) / 1048576));

#endif

}

};

/**

* @brief A pool of CANN buffers(segment buffer).

*

* This class manages a pool of CANN buffers for a specific device.

*/

struct ggml_cann_pool_buf : public ggml_cann_pool {

/**

* @brief The maximum reuse margin for a buffer.

*/

static const size_t max_reuse_margin = 1ull << 22; // 4MB

/**

* @brief The minimum free margin for a buffer.

*/

static const size_t min_free_margin = 1ull << 20; // 1MB

/**

* @brief The alignment for buffer allocation.

*/

static const size_t alignment = 128;

/**

* @brief The maximum number of buffers in the pool.

*/

static const int MAX_BUFFERS = 256;

/**

* @brief The device ID associated with this buffer pool.

*/

int device;

/**

* @brief Whether to disable clean during buffer allocation.

*/

bool disable_clean = false;

/**

* @brief Structure representing a CANN buffer.

*/

struct ggml_cann_buffer {

void * ptr = nullptr; ///< Pointer to the buffer memory.

size_t size = 0; ///< Size of the buffer.

bool used = false; ///< Whether the buffer is currently in use.

std::chrono::steady_clock::time_point last_used; ///< Last used time.

};

/**

* @brief Array of CANN buffers in the pool.

*/

ggml_cann_buffer buffer_pool[MAX_BUFFERS] = {};

/**

* @brief Total size of all buffers in the pool.

*/

size_t pool_size = 0;

/**

* @brief Constructor to initialize the buffer pool for a specific device.

*

* @param device The device ID to associate with this buffer pool.

*/

explicit ggml_cann_pool_buf(int device) : device(device) {

disable_clean = parse_bool(get_env_as_lowercase("GGML_CANN_DISABLE_BUF_POOL_CLEAN").value_or(""));

}

/**

* @brief Destructor to free all buffers in the pool.

*/

~ggml_cann_pool_buf() {

ggml_cann_set_device(device);

for (int i = 0; i < MAX_BUFFERS; ++i) {

ggml_cann_buffer & b = buffer_pool[i];

if (b.ptr != nullptr) {

aclrtFree(b.ptr);

pool_size -= b.size;

}

}

GGML_ASSERT(pool_size == 0);

}

/**

* @brief Allocate a buffer of the given size.

*

* @param size The size of the buffer to allocate.

* @param actual_size A pointer to a variable to receive the actual size of

* the allocated buffer.

* @return A pointer to the allocated buffer.

*/

void * alloc(size_t size, size_t * actual_size) override {

size = GGML_PAD(size, alignment);

if (size == 0) {

size = alignment;

}

void * ptr = nullptr;

auto now = std::chrono::steady_clock::now();

int i = 0;

for (; i < MAX_BUFFERS; ++i) {

ggml_cann_buffer & b = buffer_pool[i];

if (b.ptr == nullptr) {

break;

}

if (b.used) {

continue;

}

if (b.size >= size) {

// reuse the buffer if the size is enough

const size_t margin = b.size - size;

if (margin <= max_reuse_margin) {

*actual_size = b.size;

b.used = true;

ptr = b.ptr;

#ifdef DEBUG_CANN_MALLOC

GGML_LOG_INFO(

"cann pool[%d]: reused %p, "

"pool_size = %5u MB, "

"size = %5u MB, "

"margin = %5u MB\n",

device, b.ptr, (uint32_t) (GGML_PAD(pool_size, 1048576) / 1048576),

(uint32_t) (GGML_PAD(size, 1048576) / 1048576),

(uint32_t) (GGML_PAD(margin, 1048576) / 1048576));

#endif

break;

}

}

bool should_clean = !disable_clean && b.size > min_free_margin &&

std::chrono::duration_cast<std::chrono::milliseconds>(now - b.last_used).count() > 100;

if (should_clean) {

// free the buffer if the size is needed to be freed

ACL_CHECK(aclrtFree(b.ptr));

pool_size -= b.size;

#ifdef DEBUG_CANN_MALLOC

GGML_LOG_INFO(

"cann pool[%d]: clean %p, "

"pool_size = %5u MB, "

"size = %5u MB\n",

device, b.ptr, (uint32_t) (GGML_PAD(pool_size, 1048576) / 1048576),

(uint32_t) (GGML_PAD(b.size, 1048576) / 1048576));

#endif

b.ptr = nullptr;

}

}

if (ptr != nullptr) {

return ptr;

}

if (i < MAX_BUFFERS) {

// allocate a new buffer if no buffer can be reused

ggml_cann_buffer & b = buffer_pool[i];

ggml_cann_set_device(device);

ACL_CHECK(aclrtMalloc(&b.ptr, size, ACL_MEM_MALLOC_HUGE_FIRST));

pool_size += size;

*actual_size = size;

b.size = size;

b.used = true;

if (i >= MAX_BUFFERS - 8) {

GGML_LOG_WARN("cann pool[%d]: slots almost full\n", device);

}

#ifdef DEBUG_CANN_MALLOC

GGML_LOG_INFO(

"cann pool[%d]: allocate %p, "

"pool_size = %5u MB, "

"size = %5u MB\n",

device, b.ptr, (uint32_t) (GGML_PAD(pool_size, 1048576) / 1048576),

(uint32_t) (GGML_PAD(b.size, 1048576) / 1048576));

#endif

return b.ptr;

}

GGML_ABORT("cann pool[%d]: slots full\n", device);

}

/**

* @brief Free a buffer and return it to the pool.

*

* @param ptr Pointer to the buffer to free.

* @param size Size of the buffer to free.

*/

void free(void * ptr, size_t size) override {

GGML_UNUSED(size);

for (int i = 0; i < MAX_BUFFERS; ++i) {

ggml_cann_buffer & b = buffer_pool[i];

if (b.ptr != ptr) {

continue;

}

b.used = false;

b.last_used = std::chrono::steady_clock::now();

#ifdef DEBUG_CANN_MALLOC

GGML_LOG_INFO(

"cann pool[%d]: return %p, "

"pool_size = %5u MB\n",

device, b.ptr, (uint32_t) (GGML_PAD(pool_size, 1048576) / 1048576));

#endif

return;

}

GGML_ABORT("cann pool[%d]: slots full\n", device);

}

};

/**

* @brief A pool of CANN buffers with virtual memory.

*

* This class manages a pool of CANN buffers with virtual memory for a specific

* device.

*/

struct ggml_cann_pool_vmm : public ggml_cann_pool {

/**

* @brief The maximum size of the virtual memory pool (32 GB).

*/

size_t max_size;

/**

* @brief The device ID associated with this buffer pool.

*/

int device;

/**

* @brief Pointer to the start of the virtual memory pool.

*/

void * pool_addr = 0;

/**

* @brief Amount of virtual memory used in the pool.

*/

size_t pool_used = 0;

/**

* @brief Total size of the virtual memory pool.

*/

size_t pool_size = 0;

/**

* @brief Allocation granularity for the virtual memory pool.

*/

size_t granularity;

/**

* @brief Handles for the physical memory allocated.

*/

std::vector<aclrtDrvMemHandle> handles;

/**

* @brief Offsets for the mapped memory regions.

*/

std::vector<void *> map_offsets;

/**

* @brief Constructor to initialize the buffer pool with virtual memory for

* a specific device.

*

* @param device The device ID to associate with this buffer pool.

*/

explicit ggml_cann_pool_vmm(int device) : device(device) {

auto dev = ggml_cann_info().devices[device];

granularity = dev.vmm_granularity;

max_size = dev.total_vram;

}

/**

* @brief Destructor to free all buffers in the virtual memory pool.

*/

~ggml_cann_pool_vmm() {

if (pool_addr != 0) {

for (auto & offset : map_offsets) {

ACL_CHECK(aclrtUnmapMem(offset));

}

for (auto & handle : handles) {

ACL_CHECK(aclrtFreePhysical(handle));

}

ACL_CHECK(aclrtReleaseMemAddress(pool_addr));

}

}

/**

* @brief Allocate a buffer of the given size in the virtual memory pool.

*

* @param size The size of the buffer to allocate.

* @param actual_size A pointer to a variable to receive the actual size of

* the allocated buffer.

* @return A pointer to the allocated buffer.

*/

void * alloc(size_t size, size_t * actual_size) override {

// round up the allocation size to the alignment to ensure that all

// allocations are aligned for all data types

const size_t alignment = 128;

size = GGML_PAD(size, alignment);

if (size == 0) {

size = alignment;

}

size_t avail = pool_size - pool_used;

if (size > avail) {

// round up to the next multiple of the granularity

size_t reserve_size = size - avail;

reserve_size = GGML_PAD(reserve_size, granularity);

GGML_ASSERT(pool_size + reserve_size <= max_size);

// allocate more physical memory

aclrtPhysicalMemProp prop = {};

prop.handleType = ACL_MEM_HANDLE_TYPE_NONE;

prop.allocationType = ACL_MEM_ALLOCATION_TYPE_PINNED;

prop.memAttr = ACL_HBM_MEM_HUGE;

prop.location.type = ACL_MEM_LOCATION_TYPE_DEVICE;

prop.location.id = device;

prop.reserve = 0;

aclrtDrvMemHandle handle;

ACL_CHECK(aclrtMallocPhysical(&handle, reserve_size, &prop, 0));

// reserve virtual address space (if not already reserved)

if (pool_addr == 0) {

ACL_CHECK(aclrtReserveMemAddress(&pool_addr, max_size, 0, NULL, 1));

}

// map at the end of the pool

ACL_CHECK(aclrtMapMem((char *) pool_addr + pool_size, reserve_size, 0, handle, 0));

handles.push_back(handle);

map_offsets.push_back((char *) pool_addr + pool_size);

// add to the pool

pool_size += reserve_size;

#ifdef DEBUG_CANN_MALLOC

GGML_LOG_INFO("cann pool[%d]: size increased to %llu MB (reserved %llu MB)\n", device,

(unsigned long long) (pool_size / 1024 / 1024),

(unsigned long long) (reserve_size / 1024 / 1024));

#endif

}

GGML_ASSERT(pool_addr != 0);

void * ptr = (void *) ((char *) pool_addr + pool_used);

*actual_size = size;

pool_used += size;

#ifdef DEBUG_CANN_MALLOC

GGML_LOG_INFO("cann pool[%d]: allocated %llu bytes at %llx\n", device, (unsigned long long) size,

(unsigned long long) ptr);

#endif

return ptr;

}

/**

* @brief Free a buffer and return it to the virtual memory pool.

*

* @param ptr Pointer to the buffer to free.

* @param size Size of the buffer to free.

*/

void free(void * ptr, size_t size) override {

#ifdef DEBUG_CANN_MALLOC

GGML_LOG_INFO("cann pool[%d]: freed %llu bytes at %llx\n", device, (unsigned long long) size,

(unsigned long long) ptr);

#endif

pool_used -= size;

// all deallocations must be in reverse order of the allocations

GGML_ASSERT(ptr == (void *) ((char *) pool_addr + pool_used));

}

};

/**

* @brief Create a new CANN pool for a specific device.

*

* Factory method to create a new CANN pool object based on the device type.

*

* @param device The device ID for which to create the pool.

* @return A unique pointer to the created CANN pool.

*/

std::unique_ptr<ggml_cann_pool> ggml_backend_cann_context::new_pool_for_device(int device) {

std::string mem_pool_type = get_env_as_lowercase("GGML_CANN_MEM_POOL").value_or("");

if (mem_pool_type == "prio") {

GGML_LOG_INFO("%s: device %d use buffer pool with priority queue\n", __func__, device);

return std::unique_ptr<ggml_cann_pool>(new ggml_cann_pool_buf_prio(device));

}

if (ggml_cann_info().devices[device].vmm && mem_pool_type != "leg") {

GGML_LOG_INFO("%s: device %d use vmm pool\n", __func__, device);

return std::unique_ptr<ggml_cann_pool>(new ggml_cann_pool_vmm(device));

}

GGML_LOG_INFO("%s: device %d use buffer pool\n", __func__, device);

return std::unique_ptr<ggml_cann_pool>(new ggml_cann_pool_buf(device));

}

// cann buffer

/**

* @brief Context for managing a CANN buffer associated with a specific device.

*

* This structure holds information about a CANN buffer, including the device

* ID, device pointer, and a name derived from GGML_CANN_NAME and the device ID.

*/

struct ggml_backend_cann_buffer_context {

int32_t device; ///< The device ID associated with this buffer context.

void * dev_ptr = nullptr; ///< Pointer to the device memory allocated for the buffer.

/**

* @brief Constructor to initialize the CANN buffer context.

*

* @param device The device ID associated with this buffer context.

* @param dev_ptr Pointer to the device memory allocated for the buffer.

*/

ggml_backend_cann_buffer_context(int32_t device, void * dev_ptr) : device(device), dev_ptr(dev_ptr) {}

/**

* @brief Destructor to free the device memory allocated for the buffer.

*/

~ggml_backend_cann_buffer_context() { ACL_CHECK(aclrtFree(dev_ptr)); }

};

// cann buffer type

/**

* @brief Structure representing context information for a specific backend

* buffer type.

*/

struct ggml_backend_cann_buffer_type_context {

int32_t device; /**< Device identifier associated with the buffer context. */

std::string name; /**< Name associated with the buffer context. */

};

/**

* @brief Retrieves the name associated with a CANN buffer type.

*

* This function returns the descriptive name associated with the specified

* CANN buffer type context.

*

* @param buft Pointer to the buffer type context.

* @return Const pointer to the C-style string containing the name.

*/

static const char * ggml_backend_cann_buffer_type_name(ggml_backend_buffer_type_t buft) {

ggml_backend_cann_buffer_type_context * buft_ctx = (ggml_backend_cann_buffer_type_context *) buft->context;

return buft_ctx->name.c_str();

}

/**

* @brief Checks if the backend buffer type is associated with the CANN backend.

*

* This function checks whether the provided backend buffer type is associated

* with the CANN backend based on the comparison of its name retrieval function

* pointer.

*

* @param buft Pointer to the backend buffer type to check.

* @return bool Returns true if the buffer type is associated with the CANN

* backend, otherwise false.

*/

static bool ggml_backend_buft_is_cann(ggml_backend_buffer_type_t buft) {

return buft->iface.get_name == ggml_backend_cann_buffer_type_name;

}

/**

* @brief Free resources associated with a CANN buffer.

*

* This function frees the resources associated with a CANN buffer, including

* its context.

*

* @param buffer The CANN buffer to free.

*/

static void ggml_backend_cann_buffer_free_buffer(ggml_backend_buffer_t buffer) {

ggml_backend_cann_buffer_context * ctx = (ggml_backend_cann_buffer_context *) buffer->context;

delete ctx;

}

/**

* @brief Retrieve the base pointer of a CANN buffer.

*

* This function returns the base pointer of a CANN buffer, which points to the

* device memory allocated for the buffer.

*

* @param buffer The CANN buffer whose base pointer is to be retrieved.

* @return A pointer to the base of the device memory allocated for the buffer.

*/

static void * ggml_backend_cann_buffer_get_base(ggml_backend_buffer_t buffer) {

ggml_backend_cann_buffer_context * ctx = (ggml_backend_cann_buffer_context *) buffer->context;

return ctx->dev_ptr;

}

/**

* @brief Transform quantized Q4.0 tensor data into a format suitable for CANN

* processing.

*

* This function transforms quantized Q4.0 tensor data into a format suitable

* for CANN processing. It extracts quantization values and scales from the

* source data and prepares them in a format expected by CANN operations.

*

* @param tensor Pointer to the tensor information.

* @param src Pointer to the source data in Q4.0 format.

* @param dst Pointer to the destination buffer where transformed data will be

* stored.

*/

static void ggml_backend_cann_transform_q4_0(ggml_tensor * tensor, const void * src, void * dst) {

int64_t n_elems = ggml_nelements(tensor);

int64_t groups = n_elems / QK4_0;

size_t quant_bytes = n_elems * sizeof(uint8_t) / 2;

uint8_t * quant_offset = (uint8_t *) dst;

uint16_t * scale_offset = (uint16_t *) ((char *) dst + quant_bytes);

for (int i = 0; i < groups; i++) {

const block_q4_0 * group = (const block_q4_0 *) ((const char *) src + i * sizeof(block_q4_0));

*scale_offset = group->d;

scale_offset++;

// 0-15

for (int j = 0; j < QK4_0 / 2; j += 2) {

(*quant_offset) = (group->qs[j] & 0x0F);

(*quant_offset) |= ((group->qs[j + 1] << 4));

quant_offset++;

}

// 16-31

for (int j = 0; j < QK4_0 / 2; j += 2) {

(*quant_offset) = (group->qs[j] >> 4);

(*quant_offset) |= (group->qs[j + 1] & 0xF0);

quant_offset++;

}

}

// put (uint4b_t -8) into int4b_t

for (quant_offset = (uint8_t *) dst; quant_offset < (uint8_t *) dst + quant_bytes; quant_offset++) {

(*quant_offset) ^= 0x88;

}

}

/**

* @brief Transform CANN processed data back into quantized Q4.0 format.

*

* This function transforms CANN processed data back into quantized Q4.0 format.

* It reverses the transformation performed by

* ggml_backend_cann_transform_q4_0(), converting the data back into its

* original quantized form.

*

* @param tensor Pointer to the tensor information.

* @param src Pointer to the source buffer containing transformed data.

* @param dst Pointer to the destination buffer where the Q4.0 formatted data

* will be stored.

*/

static void ggml_backend_cann_transform_back_q4_0(const ggml_tensor * tensor, void * src, void * dst) {

int64_t n_elems = ggml_nelements(tensor);

int64_t groups = n_elems / QK4_0;

size_t quant_bytes = n_elems * sizeof(uint8_t) / 2;

uint8_t * quant_offset = (uint8_t *) src;

uint16_t * scale_offset = (uint16_t *) ((char *) src + quant_bytes);

for (; quant_offset < (uint8_t *) src + quant_bytes; quant_offset++) {

(*quant_offset) ^= 0x88;

}

quant_offset = (uint8_t *) src;

for (int i = 0; i < groups; i++) {

block_q4_0 * group = (block_q4_0 *) ((char *) dst + i * sizeof(block_q4_0));

group->d = *scale_offset;

scale_offset++;

// 0-15

for (int j = 0; j < QK4_0 / 2; j += 2) {

group->qs[j] = ((*quant_offset) & 0x0F);

group->qs[j + 1] = ((*quant_offset) >> 4);

quant_offset++;

}

// 16-31

for (int j = 0; j < QK4_0 / 2; j += 2) {

group->qs[j] |= ((*quant_offset) << 4);

group->qs[j + 1] |= ((*quant_offset) & 0xF0);

quant_offset++;

}

}

}

/**

* @brief Transform quantized Q8.0 tensor data into a format suitable for CANN

* processing.

*

* This function transforms quantized Q8.0 tensor data into a format suitable

* for CANN processing. It extracts quantization values and scales from the

* source data and prepares them in a format expected by CANN operations.

*

* @param tensor Pointer to the tensor information.

* @param src Pointer to the source data in Q8.0 format.

* @param dst Pointer to the destination buffer where transformed data will be

* stored.

*/

static void ggml_backend_cann_transform_q8_0(ggml_tensor * tensor, const void * src, void * dst) {

int64_t n_elems = ggml_nelements(tensor);

int64_t groups = n_elems / QK8_0;

size_t quant_bytes = n_elems * sizeof(uint8_t);

uint8_t * quant_offset = (uint8_t *) dst;

uint16_t * scale_offset = (uint16_t *) ((char *) dst + quant_bytes);

for (int i = 0; i < groups; i++) {

const block_q8_0 * group = (const block_q8_0 *) ((const char *) src + i * sizeof(block_q8_0));

*scale_offset = group->d;

scale_offset++;

size_t group_quant_size = QK8_0 * sizeof(uint8_t);

memcpy(quant_offset, group->qs, group_quant_size);

quant_offset += group_quant_size;

}

}

/**

* @brief Transform CANN processed data back into quantized Q8.0 format.

*

* This function transforms CANN processed data back into quantized Q8.0 format.

* It reverses the transformation performed by

* ggml_backend_cann_transform_q8_0(), converting the data back into its

* original quantized form.

*

* @param tensor Pointer to the tensor information.

* @param src Pointer to the source buffer containing transformed data.

* @param dst Pointer to the destination buffer where the Q8.0 formatted data

* will be stored.

*/

static void ggml_backend_cann_transform_back_q8_0(const ggml_tensor * tensor, const void * src, void * dst) {

int64_t n_elems = ggml_nelements(tensor);

int64_t groups = n_elems / QK8_0;

size_t quant_bytes = n_elems * sizeof(uint8_t);

const uint8_t * quant_offset = (const uint8_t *) src;

const uint16_t * scale_offset = (const uint16_t *) ((const char *) src + quant_bytes);

for (int i = 0; i < groups; i++) {

block_q8_0 * group = (block_q8_0 *) ((char *) dst + i * sizeof(block_q8_0));

group->d = *scale_offset;

scale_offset++;

size_t group_quant_size = QK8_0 * sizeof(uint8_t);

memcpy(group->qs, quant_offset, group_quant_size);

quant_offset += group_quant_size;

}

}

/**

* @brief Transform tensor data based on its type for CANN processing.

*

* This function transforms tensor data based on its quantization type for CANN

* processing. It dispatches the transformation based on the tensor's type to

* specialized functions handling Q4.0 and Q8.0 formats.

*

* @param tensor Pointer to the tensor information.

* @param src Pointer to the source data to be transformed.

* @param dst Pointer to the destination buffer where transformed data will be

* stored.

*/

static void ggml_backend_cann_transform(ggml_tensor * tensor, const void * src, void * dst) {

switch (tensor->type) {

case GGML_TYPE_Q4_0:

ggml_backend_cann_transform_q4_0(tensor, src, dst);

break;

case GGML_TYPE_Q8_0:

ggml_backend_cann_transform_q8_0(tensor, src, dst);

break;

default:

break;

}

}

/**

* @brief Transform CANN processed data back into tensor data based on its type.

*

* This function transforms CANN processed data back into tensor data based on

* its quantization type for Q4.0 and Q8.0 formats. It dispatches the

* transformation based on the tensor's type to specialized functions.

*

* @param tensor Pointer to the tensor information.

* @param src Pointer to the source data containing CANN processed data.

* @param dst Pointer to the destination buffer where transformed tensor data

* will be stored.

*/

static void ggml_backend_cann_transform_back(const ggml_tensor * tensor, void * src, void * dst) {

switch (tensor->type) {

case GGML_TYPE_Q4_0:

ggml_backend_cann_transform_back_q4_0(tensor, src, dst);

break;

case GGML_TYPE_Q8_0:

ggml_backend_cann_transform_back_q8_0(tensor, src, dst);

break;

default:

break;

}

}

/**

* @brief Check if transformation is needed for a given tensor type.

*

* This function checks if transformation is needed for a given tensor type

* to prepare data for CANN processing.

*

* @param type The tensor type to check.

* @return true if transformation is needed, false otherwise.

*/

static bool need_transform(ggml_type type) {

switch (type) {

case GGML_TYPE_Q4_0:

case GGML_TYPE_Q8_0:

return true;

default:

return false;

}

}

/**

* @brief Initialize a tensor using data from a CANN buffer.

*

* This function initializes a tensor using data from a CANN buffer.

* It handles special cases such as views and quantization.

*

* @param buffer The CANN buffer from which to initialize the tensor.

* @param tensor Pointer to the tensor to be initialized.

*/

static enum ggml_status ggml_backend_cann_buffer_init_tensor(ggml_backend_buffer_t buffer, ggml_tensor * tensor) {

if (tensor->view_src != NULL && tensor->view_offs == 0) {

GGML_ASSERT(tensor->view_src->buffer->buft == buffer->buft);

return GGML_STATUS_SUCCESS;

}

// TODO: cann backend doesn't support quantized yet. Just leave the code

// here.

if (ggml_is_quantized(tensor->type)) {

// Initialize padding to 0 to avoid possible NaN values

size_t original_size = ggml_nbytes(tensor);

size_t padded_size = ggml_backend_buft_get_alloc_size(buffer->buft, tensor);

if (padded_size > original_size && tensor->view_src == nullptr) {

size_t memset_size = padded_size - original_size;

ACL_CHECK(aclrtMemset((char *) tensor->data + original_size, memset_size, 0, memset_size));

}

}

return GGML_STATUS_SUCCESS;

}

/**

* @brief Workspace for caching NZ buffers per device.

*

* This struct manages a device buffer used in NZ computations. It supports

* allocation, reallocation, and clearing of cached memory. The struct is

* designed to be used with a global array, one per device.

*/

struct ggml_cann_nz_workspace {

void * ptr; // Pointer to allocated device buffer

size_t allocated; // Size of currently allocated buffer in bytes

/**

* @brief Constructor. Initializes the workspace with no allocated memory.

*/

ggml_cann_nz_workspace() : ptr(nullptr), allocated(0) {}

/**

* @brief Free cached memory and reset the workspace.

*

* If a buffer has been allocated, this function releases it using

* aclrtFree and resets internal state.

*/

void clear() {

if (ptr) {

ACL_CHECK(aclrtFree(ptr));

ptr = nullptr;

allocated = 0;

}

}

/**

* @brief Allocate or reallocate the workspace buffer.

*

* If the requested size is larger than the currently allocated size,

* the old buffer will be freed and a new buffer of the requested size

* will be allocated on the device.

*

* @param new_size Size in bytes to allocate for the workspace.

*/

void realloc(size_t new_size) {

if (new_size > allocated) {

clear();

ACL_CHECK(aclrtMalloc(&ptr, new_size, ACL_MEM_MALLOC_HUGE_FIRST));

allocated = new_size;

}

}

/**

* @brief Get the device buffer pointer.

*

* @return Pointer to the allocated buffer, or nullptr if not allocated.

*/

void * get() const { return ptr; }

};

/**

* @brief Global array of NZ workspaces, one per device.

*/

static ggml_cann_nz_workspace g_nz_workspaces[GGML_CANN_MAX_DEVICES];

/**

* @brief Convert tensor weights to NZ format using Ascend CANN API.

*

* This function creates a transposed tensor descriptor and performs the

* TransMatmulWeight operation. Converting tensor formats can significantly

* improve performance on certain hardware.

*

* @param tensor Pointer to the input ggml_tensor containing the weights.

* @param offset Byte offset within the tensor data buffer where weights start.

* @param device device id.

*

* @note The workspace buffer used in this function is managed globally and reused

* across calls. This reduces overhead from repeated memory allocation and deallocation.

*/

static void weight_format_to_nz(ggml_tensor * tensor, size_t offset, int device) {

acl_tensor_ptr weightTransposed = ggml_cann_create_tensor(tensor, tensor->ne, tensor->nb, 2, ACL_FORMAT_ND, offset);

uint64_t workspaceSize = 0;

aclOpExecutor * executor;

// TransMatmulWeight

ACL_CHECK(aclnnTransMatmulWeightGetWorkspaceSize(weightTransposed.get(), &workspaceSize, &executor));

// Avoid frequent malloc/free of the workspace.

g_nz_workspaces[device].realloc(workspaceSize);

void * g_nz_workspace = g_nz_workspaces[device].get();

ACL_CHECK(aclnnTransMatmulWeight(g_nz_workspace, workspaceSize, executor, nullptr));

}

// TODO: need handle tensor which has paddings.

/**

* @brief Set tensor data in a CANN buffer.

*

* This function sets tensor data in a CANN buffer, handling transformations

* if needed based on the tensor's type.

*

* @param buffer The CANN buffer where the tensor data will be set.

* @param tensor Pointer to the tensor whose data will be set.

* @param data Pointer to the source data to be copied into the tensor.

* @param offset Offset in the source data from where to start copying.

* @param size Size of the data to be copied, in bytes.

*/

static void ggml_backend_cann_buffer_set_tensor(ggml_backend_buffer_t buffer,

ggml_tensor * tensor,

const void * data,

size_t offset,

size_t size) {

ggml_backend_cann_buffer_context * ctx = (ggml_backend_cann_buffer_context *) buffer->context;

ggml_cann_set_device(ctx->device);

// TODO: refer to cann(#6017), it use thread's default stream.

// For acl, synchronous functions use this default stream.

// Why aclrtSynchronizeDevice?

// Only check env once.

static bool weight_to_nz = parse_bool(get_env_as_lowercase("GGML_CANN_WEIGHT_NZ").value_or("on"));

if (!need_transform(tensor->type)) {

ACL_CHECK(aclrtMemcpy((char *) tensor->data + offset, size, data, size, ACL_MEMCPY_HOST_TO_DEVICE));

if (weight_to_nz && is_matmul_weight((const ggml_tensor *) tensor)) {

GGML_ASSERT(tensor->ne[2] == 1);

GGML_ASSERT(tensor->ne[3] == 1);

weight_format_to_nz(tensor, offset, ctx->device);

}

} else {

void * transform_buffer = malloc(size);

ggml_backend_cann_transform(tensor, data, transform_buffer);

ACL_CHECK(aclrtMemcpy((char *) tensor->data + offset, size, transform_buffer, size, ACL_MEMCPY_HOST_TO_DEVICE));

free(transform_buffer);

}

}

/**

* @brief Get tensor data from a CANN buffer.

*

* This function retrieves tensor data from a CANN buffer, handling

* transformations if needed based on the tensor's type.

*

* @param buffer The CANN buffer from which to retrieve tensor data.

* @param tensor Pointer to the tensor whose data will be retrieved.

* @param data Pointer to the destination buffer where the tensor data will be

* copied.

* @param offset Offset in the destination buffer where to start copying.

* @param size Size of the data to be copied, in bytes.

*/

static void ggml_backend_cann_buffer_get_tensor(ggml_backend_buffer_t buffer,

const ggml_tensor * tensor,

void * data,

size_t offset,

size_t size) {

ggml_backend_cann_buffer_context * ctx = (ggml_backend_cann_buffer_context *) buffer->context;

ggml_cann_set_device(ctx->device);

if (!need_transform(tensor->type)) {

ACL_CHECK(aclrtMemcpy(data, size, (char *) tensor->data + offset, size, ACL_MEMCPY_DEVICE_TO_HOST));

} else {

void * transform_buffer = malloc(size);

ACL_CHECK(aclrtMemcpy(transform_buffer, size, (char *) tensor->data + offset, size, ACL_MEMCPY_DEVICE_TO_HOST));

ggml_backend_cann_transform_back(tensor, transform_buffer, data);

free(transform_buffer);

}

}

/**

* @brief Copy tensor data between CANN buffers if possible.

*

* This function copies tensor data between CANN buffers if the source and

* destination buffers are CANN buffers and they meet the necessary conditions

* (same device or devices can access each other).

*

* @param buffer The destination CANN buffer where the tensor data will be

* copied.

* @param src Pointer to the source tensor whose data will be copied.

* @param dst Pointer to the destination tensor where the data will be copied.

* @return true if the copy operation succeeded, false otherwise.

*/

static bool ggml_backend_cann_buffer_cpy_tensor(ggml_backend_buffer_t buffer,

const ggml_tensor * src,

ggml_tensor * dst) {

if (ggml_backend_buft_is_cann(src->buffer->buft)) {

ggml_backend_cann_buffer_context * src_ctx = (ggml_backend_cann_buffer_context *) src->buffer->context;

ggml_backend_cann_buffer_context * dst_ctx = (ggml_backend_cann_buffer_context *) buffer->context;

size_t memcpy_size = ggml_nbytes(src);

// Same device.

if (src_ctx->device == dst_ctx->device) {

ACL_CHECK(aclrtMemcpy((char *) dst->data, memcpy_size, (const char *) src->data, memcpy_size,

ACL_MEMCPY_DEVICE_TO_DEVICE));

return true;

} else {

#ifdef ASCEND_310P

// TODO: Support 310p P2P copy

return false;

#endif

// Different device but can access by peer.

int32_t canAccessPeer = 0;

ACL_CHECK(aclrtDeviceCanAccessPeer(&canAccessPeer, src_ctx->device, dst_ctx->device));

if (canAccessPeer) {

ggml_cann_set_device(src_ctx->device);

ACL_CHECK(aclrtDeviceEnablePeerAccess(dst_ctx->device, 0));

ACL_CHECK(aclrtMemcpy((char *) dst->data, memcpy_size, (const char *) src->data, memcpy_size,

ACL_MEMCPY_DEVICE_TO_DEVICE));

return true;

}

}

}

return false;

}

/**

* @brief Clear a CANN buffer by setting all its memory to a specified value.

*

* This function clears a CANN buffer by setting all its memory to a specified

* value.

*

* @param buffer The CANN buffer to be cleared.

* @param value The value to which each byte in the buffer will be set.

*/

static void ggml_backend_cann_buffer_clear(ggml_backend_buffer_t buffer, uint8_t value) {

ggml_backend_cann_buffer_context * ctx = (ggml_backend_cann_buffer_context *) buffer->context;

ggml_cann_set_device(ctx->device);

ACL_CHECK(aclrtMemset(ctx->dev_ptr, buffer->size, value, buffer->size));

}

/**

* @brief Interface for a CANN buffer in the backend.

*

* This structure defines function pointers to operations that can be performed

* on a CANN buffer within the backend.

*/

static const ggml_backend_buffer_i ggml_backend_cann_buffer_interface = {

/* .free_buffer = */ ggml_backend_cann_buffer_free_buffer,

/* .get_base = */ ggml_backend_cann_buffer_get_base,

/* .init_tensor = */ ggml_backend_cann_buffer_init_tensor,

/* .memset_tensor = */ NULL,

/* .set_tensor = */ ggml_backend_cann_buffer_set_tensor,

/* .get_tensor = */ ggml_backend_cann_buffer_get_tensor,

/* .cpy_tensor = */ ggml_backend_cann_buffer_cpy_tensor,

/* .clear = */ ggml_backend_cann_buffer_clear,

/* .reset = */ NULL,

};

/**

* @brief Allocates a new CANN buffer of the specified type and size.

*

* This function allocates a new CANN buffer on the specified device with the

* given size.

*

* @param buft Pointer to the buffer type context.

* @param size Size in bytes of the buffer to allocate.

* @return Pointer to the allocated buffer, or nullptr if allocation fails.

*/

static ggml_backend_buffer_t ggml_backend_cann_buffer_type_alloc_buffer(ggml_backend_buffer_type_t buft, size_t size) {

ggml_backend_cann_buffer_type_context * buft_ctx = (ggml_backend_cann_buffer_type_context *) buft->context;

ggml_cann_set_device(buft_ctx->device);

const size_t alignment = 128;

size = GGML_PAD(size, alignment);

if (size == 0) {

size = alignment;

}

void * dev_ptr;

aclError err = aclrtMalloc(&dev_ptr, size, ACL_MEM_MALLOC_HUGE_FIRST);

if (err != ACL_SUCCESS) {

GGML_LOG_ERROR("%s: allocating %.2f MiB on device %d: aclrtMalloc failed: %s\n", __func__,

size / 1024.0 / 1024.0, buft_ctx->device, aclGetRecentErrMsg());

return nullptr;

}

ggml_backend_cann_buffer_context * ctx = new ggml_backend_cann_buffer_context(buft_ctx->device, dev_ptr);

return ggml_backend_buffer_init(buft, ggml_backend_cann_buffer_interface, ctx, size);

}

/**

* @brief Retrieves the memory alignment requirement for CANN buffers of this

* type.

*

* This function returns the alignment requirement in bytes for memory allocated

* by the CANN buffer type.

*

* @param buft Pointer to the buffer type context (unused in this

* implementation).

* @return The alignment requirement in bytes (fixed at 128 bytes for CANN

* buffers).

*/

static size_t ggml_backend_cann_buffer_type_get_alignment(ggml_backend_buffer_type_t buft) {

return 128;

GGML_UNUSED(buft);

}

/**

* @brief Calculates the allocation size required for a tensor in a CANN buffer.

*

* Computes the total allocation size needed for storing the tensor's data in a

* CANN buffer, considering any necessary padding or adjustments for quantized

* types.

*

* @param buft Pointer to the buffer type context (unused in this

* implementation).

* @param tensor Pointer to the tensor for which the allocation size is

* calculated.

* @return The total allocation size in bytes required for the tensor in the

* CANN buffer.

*/

static size_t ggml_backend_cann_buffer_type_get_alloc_size(ggml_backend_buffer_type_t buft,

const ggml_tensor * tensor) {

size_t size = ggml_nbytes(tensor);

int64_t ne0 = tensor->ne[0];

// Only check env once.

static bool weight_to_nz = parse_bool(get_env_as_lowercase("GGML_CANN_WEIGHT_NZ").value_or("on"));

// last line must bigger than 32, because every single op deal at

// least 32 bytes.

// TODO: quantized type?

// int64_t line_size = ne0 * ggml_element_size(tensor);

// int64_t line_size_align_32 = (line_size + 31) & ~31;

// size += (line_size_align_32 - line_size);

if (ggml_is_quantized(tensor->type)) {

if (ne0 % MATRIX_ROW_PADDING != 0) {

size += ggml_row_size(tensor->type, MATRIX_ROW_PADDING - ne0 % MATRIX_ROW_PADDING);

}

} else if (weight_to_nz && is_matmul_weight((const ggml_tensor *) tensor)) {

// NZ format weight are not support quantized yet.

// If ND tensor transform to NZ, size may changed.

int64_t shape[] = { tensor->ne[1], tensor->ne[0] };

GGML_ASSERT(tensor->ne[2] == 1);

GGML_ASSERT(tensor->ne[3] == 1);

const aclIntArray * acl_shape = aclCreateIntArray(shape, 2);

size_t new_size;

ACL_CHECK(aclnnCalculateMatmulWeightSizeV2(acl_shape, ggml_cann_type_mapping(tensor->type), &new_size));

ACL_CHECK(aclDestroyIntArray(acl_shape));

size = std::max(size, new_size);

}

return size;

GGML_UNUSED(buft);

}

static bool ggml_backend_cann_buffer_type_is_host(ggml_backend_buffer_type_t buft) {

return false;

GGML_UNUSED(buft);

}

/**

* @brief Interface for managing CANN buffer types in the GGML backend.

*

* Provides function pointers for allocating, querying properties, and managing

* memory for CANN buffer types in the GGML backend.

*/

static const ggml_backend_buffer_type_i ggml_backend_cann_buffer_type_interface = {

/* .get_name = */ ggml_backend_cann_buffer_type_name,

/* .alloc_buffer = */ ggml_backend_cann_buffer_type_alloc_buffer,

/* .get_alignment = */ ggml_backend_cann_buffer_type_get_alignment,

/* .get_max_size = */ NULL, // defaults to SIZE_MAX

/* .get_alloc_size = */ ggml_backend_cann_buffer_type_get_alloc_size,

/* .is_host = */ ggml_backend_cann_buffer_type_is_host,

};

/**

* @brief Retrieves the CANN buffer type for a specified device.

*

* This function initializes and returns the buffer type interface associated

* with the given device. It ensures thread-safe access using a mutex.

*

* @param device The device index for which to retrieve the buffer type.

* @return A pointer to the buffer type interface for the specified device, or

* nullptr if the device index is out of range.

*/

ggml_backend_buffer_type_t ggml_backend_cann_buffer_type(int32_t device) {

static std::mutex mutex;

std::lock_guard<std::mutex> lock(mutex);

if (device >= ggml_backend_cann_get_device_count()) {

return nullptr;

}

static ggml_backend_buffer_type ggml_backend_cann_buffer_types[GGML_CANN_MAX_DEVICES];

static bool ggml_backend_cann_buffer_type_initialized = false;

if (!ggml_backend_cann_buffer_type_initialized) {

for (int32_t i = 0; i < ggml_cann_info().device_count; i++) {

ggml_backend_cann_buffer_types[i] = {

/* .iface = */ ggml_backend_cann_buffer_type_interface,

/* .device = */ ggml_backend_reg_dev_get(ggml_backend_cann_reg(), i),

/* .context = */

new ggml_backend_cann_buffer_type_context{ i, "CANN" + std::to_string(i) },

};

}

ggml_backend_cann_buffer_type_initialized = true;

}

return &ggml_backend_cann_buffer_types[device];

}

/**

* @brief Retrieves the name associated with a CANN host buffer type.

*

* This function returns the descriptive name associated with the specified

* CANN host buffer type context.

*

* @param buft Pointer to the host buffer type context.

* @return Const pointer to the C-style string containing the name.

*/

static const char * ggml_backend_cann_host_buffer_type_name(ggml_backend_buffer_type_t buft) {

return "CANN_Host";

GGML_UNUSED(buft);

}

/**

* @brief Retrieves the name associated with a CANN host buffer.

*

* This function returns the descriptive name associated with the specified

* CANN host buffer context.

*

* @param buft Pointer to the host buffer context.

* @return Const pointer to the C-style string containing the name.

*/

static const char * ggml_backend_cann_host_buffer_name(ggml_backend_buffer_t buffer) {

return "CANN_Host";

GGML_UNUSED(buffer);

}

/**

* @brief Free resources associated with a CANN host buffer.

*

* This function frees the resources associated with a CANN host buffer, including

* its context.

*

* @param buffer The CANN host buffer to free.

*/

static void ggml_backend_cann_host_buffer_free(ggml_backend_buffer_t buffer) {

ACL_CHECK(aclrtFreeHost(buffer->context));

}

/**

* @brief Allocates a new CANN host buffer of the specified size.

*

* This function allocates a new CANN host buffer with the given size.

* @param size Size in bytes of the host buffer to allocate.

* @return Pointer to the allocated host buffer, or nullptr if allocation fails.

*/

static void * ggml_cann_host_malloc(size_t size) {

if (getenv("GGML_CANN_NO_PINNED") != nullptr) {

return nullptr;

}

const size_t alignment = 128;

size = GGML_PAD(size, alignment);

if (size == 0) {

size = alignment;

}

void * hostPtr = nullptr;

aclError err = aclrtMallocHost((void **) &hostPtr, size);

if (err != ACL_SUCCESS) {

GGML_LOG_WARN("%s: failed to allocate %.2f MiB of pinned memory: %s\n", __func__, size / 1024.0 / 1024.0,

aclGetRecentErrMsg());

return nullptr;

}

return hostPtr;

}

/**

* @brief Allocates a new CANN host buffer of the specified type and size.

*

* @param buft Pointer to the host buffer type context.

* @param size Size in bytes of the host buffer to allocate.

* @return Pointer to the allocated host buffer, or CPU buffer pointer if allocation fails.

*/

static ggml_backend_buffer_t ggml_backend_cann_host_buffer_type_alloc_buffer(ggml_backend_buffer_type_t buft,

size_t size) {

void * hostPtr = ggml_cann_host_malloc(size);

if (hostPtr == nullptr) {

// fallback to cpu buffer

return ggml_backend_buft_alloc_buffer(ggml_backend_cpu_buffer_type(), size);

}

ggml_backend_buffer_t buffer = ggml_backend_cpu_buffer_from_ptr(hostPtr, size);

buffer->buft = buft;

buffer->iface.free_buffer = ggml_backend_cann_host_buffer_free;

return buffer;

}

/**

* @brief Interface for managing CANN host buffer types in the GGML backend.

*

* Provides function pointers for allocating, querying properties, and managing

* memory for CANN buffer types in the GGML backend.

*/

ggml_backend_buffer_type_t ggml_backend_cann_host_buffer_type() {

static struct ggml_backend_buffer_type ggml_backend_cann_buffer_type_host = {

/* .iface = */ {

/* .get_name = */ ggml_backend_cann_host_buffer_type_name,

/* .alloc_buffer = */ ggml_backend_cann_host_buffer_type_alloc_buffer,

/* .get_alignment = */ ggml_backend_cpu_buffer_type()->iface.get_alignment,

/* .get_max_size = */ NULL, // defaults to SIZE_MAX

/* .get_alloc_size = */ ggml_backend_cpu_buffer_type()->iface.get_alloc_size,

/* .is_host = */ ggml_backend_cpu_buffer_type()->iface.is_host,

},

/* .device = */

ggml_backend_reg_dev_get(ggml_backend_cann_reg(), 0),

/* .context = */ nullptr,

};

return &ggml_backend_cann_buffer_type_host;

}

/**

* @brief Computes the forward operation for a given tensor using CANN

* operations.

*

* This function selects the appropriate CANN operation based on the type of

* operation specified in the tensor and performs the computation.

*

* @param ctx The CANN context containing necessary resources and

* configurations.

* @param dst The destination tensor where the result of the computation will be

* stored.

* @return true if the computation was successful; false otherwise.

*/

static bool ggml_cann_compute_forward(ggml_backend_cann_context & ctx, struct ggml_tensor * dst) {

switch (dst->op) {

case GGML_OP_REPEAT:

ggml_cann_repeat(ctx, dst);

break;

case GGML_OP_GET_ROWS:

ggml_cann_get_rows(ctx, dst);

break;

case GGML_OP_SET_ROWS:

ggml_cann_set_rows(ctx, dst);

break;

case GGML_OP_DUP:

ggml_cann_dup(ctx, dst);

break;

case GGML_OP_ADD:

case GGML_OP_ADD1:

ggml_cann_binary_op<aclnn_add>(ctx, dst);

break;

case GGML_OP_SUB:

ggml_cann_binary_op<aclnn_sub>(ctx, dst);

break;

case GGML_OP_ACC:

ggml_cann_acc(ctx, dst);

break;

case GGML_OP_MUL:

ggml_cann_binary_op<aclnn_mul>(ctx, dst);

break;

case GGML_OP_DIV:

ggml_cann_binary_op<aclnn_div>(ctx, dst);

break;

case GGML_OP_UNARY:

switch (ggml_get_unary_op(dst)) {

case GGML_UNARY_OP_ABS:

GGML_CANN_CALL_OP_UNARY(Abs);

break;

case GGML_UNARY_OP_NEG:

GGML_CANN_CALL_OP_UNARY(Neg);

break;

case GGML_UNARY_OP_GELU:

case GGML_UNARY_OP_GELU_ERF:

// aclnnGelu internally uses the erf-based approximation.

GGML_CANN_CALL_OP_UNARY(Gelu);

break;

case GGML_UNARY_OP_SILU:

GGML_CANN_CALL_OP_UNARY(Silu);

break;

case GGML_UNARY_OP_GELU_QUICK:

{

auto lambda = [](ggml_backend_cann_context & ctx, aclTensor * acl_src, aclTensor * acl_dst) {

GGML_CANN_CALL_ACLNN_OP(ctx, GeluV2, acl_src, 0, acl_dst);

};

ggml_cann_op_unary(lambda, ctx, dst);

}

break;

case GGML_UNARY_OP_TANH:

GGML_CANN_CALL_OP_UNARY(Tanh);

break;

case GGML_UNARY_OP_RELU:

GGML_CANN_CALL_OP_UNARY(Relu);

break;

case GGML_UNARY_OP_SIGMOID:

GGML_CANN_CALL_OP_UNARY(Sigmoid);

break;

case GGML_UNARY_OP_HARDSIGMOID:

GGML_CANN_CALL_OP_UNARY(Hardsigmoid);

break;

case GGML_UNARY_OP_HARDSWISH:

GGML_CANN_CALL_OP_UNARY(Hardswish);

break;

case GGML_UNARY_OP_EXP:

GGML_CANN_CALL_OP_UNARY(Exp);

break;

case GGML_UNARY_OP_ELU:

ggml_cann_elu(ctx, dst);

break;

case GGML_UNARY_OP_SGN:

GGML_CANN_CALL_OP_UNARY(Sign);

break;

case GGML_UNARY_OP_STEP:

ggml_cann_step(ctx, dst);

break;

default:

return false;

}

break;

case GGML_OP_GLU:

switch (ggml_get_glu_op(dst)) {

case GGML_GLU_OP_REGLU:

GGML_CANN_CALL_OP_UNARY_GATED(Relu);

break;

case GGML_GLU_OP_GEGLU:

case GGML_GLU_OP_GEGLU_ERF:

// aclnnGelu internally uses the erf-based approximation.

GGML_CANN_CALL_OP_UNARY_GATED(Gelu);

break;

case GGML_GLU_OP_SWIGLU:

GGML_CANN_CALL_OP_UNARY_GATED(Silu);

break;

case GGML_GLU_OP_GEGLU_QUICK:

{

auto lambda = [](ggml_backend_cann_context & ctx, aclTensor * acl_src, aclTensor * acl_dst) {

GGML_CANN_CALL_ACLNN_OP(ctx, GeluV2, acl_src, 0, acl_dst);

};

ggml_cann_op_unary_gated(lambda, ctx, dst);

}

break;

default:

return false;

}

break;

case GGML_OP_NORM:

ggml_cann_norm(ctx, dst);

break;

case GGML_OP_GROUP_NORM:

ggml_cann_group_norm(ctx, dst);

break;

case GGML_OP_L2_NORM:

ggml_cann_l2_norm(ctx, dst);

break;

case GGML_OP_CROSS_ENTROPY_LOSS:

ggml_cann_cross_entropy_loss(ctx, dst);

break;

case GGML_OP_CONCAT:

ggml_cann_concat(ctx, dst);

break;

case GGML_OP_UPSCALE:

ggml_cann_upsample_nearest2d(ctx, dst);

break;

case GGML_OP_PAD:

ggml_cann_pad(ctx, dst);

break;

case GGML_OP_ARANGE:

ggml_cann_arange(ctx, dst);

break;

case GGML_OP_TIMESTEP_EMBEDDING:

ggml_cann_timestep_embedding(ctx, dst);

break;

case GGML_OP_LEAKY_RELU:

ggml_cann_leaky_relu(ctx, dst);

break;

case GGML_OP_RMS_NORM:

ggml_cann_rms_norm(ctx, dst);

break;

case GGML_OP_MUL_MAT:

ggml_cann_mul_mat(ctx, dst);

break;

case GGML_OP_MUL_MAT_ID:

ggml_cann_mul_mat_id(ctx, dst);

break;

case GGML_OP_SCALE:

ggml_cann_scale(ctx, dst);

break;

case GGML_OP_SQR:

GGML_ASSERT(dst->src[1] == nullptr);

dst->src[1] = dst->src[0];

ggml_cann_binary_op<aclnn_mul>(ctx, dst);

break;

case GGML_OP_SQRT:

GGML_CANN_CALL_OP_UNARY(Sqrt);

break;

case GGML_OP_CLAMP:

ggml_cann_clamp(ctx, dst);

break;

case GGML_OP_CPY:

ggml_cann_cpy(ctx, dst);

break;

case GGML_OP_CONT:

ggml_cann_dup(ctx, dst);

break;

case GGML_OP_NONE:

case GGML_OP_RESHAPE:

case GGML_OP_VIEW:

case GGML_OP_PERMUTE:

case GGML_OP_TRANSPOSE:

break;

case GGML_OP_DIAG_MASK_INF:

ggml_cann_diag_mask(ctx, dst, -INFINITY);

break;

case GGML_OP_SOFT_MAX:

ggml_cann_softmax(ctx, dst);

break;

case GGML_OP_ROPE:

ggml_cann_rope(ctx, dst);

break;

case GGML_OP_IM2COL:

ggml_cann_im2col(ctx, dst);

break;

case GGML_OP_POOL_2D:

ggml_cann_pool2d(ctx, dst);

break;

case GGML_OP_SUM:

ggml_cann_sum(ctx, dst);

break;

case GGML_OP_SUM_ROWS:

ggml_cann_sum_rows(ctx, dst);

break;

case GGML_OP_ARGSORT:

ggml_cann_argsort(ctx, dst);

break;

case GGML_OP_ARGMAX:

ggml_cann_argmax(ctx, dst);

break;

case GGML_OP_COS:

ggml_cann_op_unary<aclnn_cos>(ctx, dst);

break;

case GGML_OP_SIN:

ggml_cann_op_unary<aclnn_sin>(ctx, dst);

break;

case GGML_OP_CONV_TRANSPOSE_1D:

ggml_cann_conv_transpose_1d(ctx, dst);

break;

case GGML_OP_LOG:

GGML_CANN_CALL_OP_UNARY(Log);

break;

case GGML_OP_MEAN:

ggml_cann_mean(ctx, dst);

break;

case GGML_OP_PAD_REFLECT_1D:

ggml_cann_pad_reflect_1d(ctx, dst);

break;

case GGML_OP_COUNT_EQUAL:

ggml_cann_count_equal(ctx, dst);

break;

case GGML_OP_FLASH_ATTN_EXT:

ggml_cann_flash_attn_ext(ctx, dst);

break;

case GGML_OP_OUT_PROD:

ggml_cann_out_prod(ctx, dst);

break;

case GGML_OP_GATED_LINEAR_ATTN:

ggml_cann_gated_linear_attn(ctx, dst);

break;

case GGML_OP_SSM_CONV:

ggml_cann_ssm_conv(ctx, dst);

break;

default:

return false;

}

return true;

}

// backend

/**

* @brief Retrieves the name associated with the CANN backend.

*

* This function returns the name assigned to the CANN backend, which is stored

* in the context of the provided backend structure.

*

* @param backend Pointer to the CANN backend structure.

* @return A pointer to a constant string representing the backend name.

*/

static const char * ggml_backend_cann_name(ggml_backend_t backend) {

ggml_backend_cann_context * cann_ctx = (ggml_backend_cann_context *) backend->context;

return cann_ctx->name.c_str();

}

/**

* @brief Frees resources associated with the CANN backend.

*

* This function releases resources associated with the CANN backend context

* and resets the device associated with the backend to its initial state.

*

* @param backend Pointer to the CANN backend structure to be freed.

*/

static void ggml_backend_cann_free(ggml_backend_t backend) {

ggml_backend_cann_context * cann_ctx = (ggml_backend_cann_context *) backend->context;

ACL_CHECK(aclrtSynchronizeDevice());

ACL_CHECK(aclrtResetDevice(cann_ctx->device));

delete cann_ctx;

delete backend;

}

/**

* @brief Sets tensor data asynchronously in the CANN backend.

*

* This function asynchronously sets tensor data in the CANN backend.

*

* @param backend Pointer to the CANN backend structure.

* @param tensor Pointer to the tensor structure to set data for.

* @param data Pointer to the host data to copy to the tensor.

* @param offset Offset in bytes within the host data.

* @param size Size of the data to copy in bytes.

*/

static void ggml_backend_cann_set_tensor_async(ggml_backend_t backend,

ggml_tensor * tensor,

const void * data,

size_t offset,

size_t size) {

ggml_backend_cann_context * cann_ctx = (ggml_backend_cann_context *) backend->context;

ggml_backend_buffer_t buf = tensor->view_src ? tensor->view_src->buffer : tensor->buffer;

GGML_ASSERT(buf->buft == ggml_backend_cann_buffer_type(cann_ctx->device) && "unsupported buffer type");

GGML_ASSERT(!ggml_is_quantized(tensor->type));

ACL_CHECK(aclrtMemcpyAsync((char *) tensor->data + offset, size, data, size, ACL_MEMCPY_HOST_TO_DEVICE,

cann_ctx->stream()));

}

/**

* @brief Gets tensor data asynchronously in the CANN backend.

*

* This function asynchronously gets tensor data in the CANN backend.

*

* @param backend Pointer to the CANN backend structure.

* @param tensor Pointer to the tensor structure to get data from.

* @param data Pointer to the host data to copy from the tensor.

* @param offset Offset in bytes within the host data.

* @param size Size of the data to copy in bytes.

*/

static void ggml_backend_cann_get_tensor_async(ggml_backend_t backend,

const ggml_tensor * tensor,

void * data,

size_t offset,

size_t size) {

ggml_backend_cann_context * cann_ctx = (ggml_backend_cann_context *) backend->context;

ggml_backend_buffer_t buf = tensor->view_src ? tensor->view_src->buffer : tensor->buffer;

GGML_ASSERT(buf->buft == ggml_backend_cann_buffer_type(cann_ctx->device) && "unsupported buffer type");

GGML_ASSERT(!ggml_is_quantized(tensor->type));

ACL_CHECK(aclrtMemcpyAsync(data, size, (char *) tensor->data + offset, size, ACL_MEMCPY_DEVICE_TO_HOST,

cann_ctx->stream()));

}

/**

* @brief Asynchronously copies tensor data between CANN backends.

*

* This function copies tensor data asynchronously between two CANN backends. It

* checks if both tensors reside in CANN buffers and whether the devices support

* peer-to-peer access for direct copying. If not, it returns false.

*

* @param backend_src Pointer to the source CANN backend structure.

* @param backend_dst Pointer to the destination CANN backend structure.

* @param src Pointer to the source tensor to copy data from.

* @param dst Pointer to the destination tensor to copy data to.

* @return true if the copy operation succeeds, false otherwise.

*/

static bool ggml_backend_cann_cpy_tensor_async(ggml_backend_t backend_src,

ggml_backend_t backend_dst,

const ggml_tensor * src,

ggml_tensor * dst) {

GGML_ASSERT(ggml_backend_is_cann(backend_src) || ggml_backend_is_cann(backend_dst));

GGML_ASSERT(!is_matmul_weight((const ggml_tensor *) src));

if (!ggml_backend_buft_is_cann(src->buffer->buft) || !ggml_backend_buft_is_cann(dst->buffer->buft)) {

return false;

}

ggml_backend_buffer_t buf_src = src->view_src ? src->view_src->buffer : src->buffer;

ggml_backend_buffer_t buf_dst = dst->view_src ? dst->view_src->buffer : dst->buffer;

ggml_backend_cann_context * cann_ctx_src = (ggml_backend_cann_context *) backend_src->context;

ggml_backend_cann_context * cann_ctx_dst = (ggml_backend_cann_context *) backend_dst->context;

size_t copy_size = ggml_nbytes(dst);

if (copy_size == 0) {

return true;

}

if (backend_src != backend_dst) {

#ifdef ASCEND_310P

// TODO: Support 310p P2P copy

return false;

#endif

ggml_backend_cann_buffer_context * buf_ctx_src = (ggml_backend_cann_buffer_context *) buf_src->context;

ggml_backend_cann_buffer_context * buf_ctx_dst = (ggml_backend_cann_buffer_context *) buf_dst->context;

GGML_ASSERT(cann_ctx_src->device == buf_ctx_src->device);

GGML_ASSERT(cann_ctx_dst->device == buf_ctx_dst->device);

int32_t canAccessPeer = 0;

ACL_CHECK(aclrtDeviceCanAccessPeer(&canAccessPeer, cann_ctx_src->device, cann_ctx_dst->device));

if (!canAccessPeer) {

return false;

}

// need open both directions for memcpyasync between devices.

ACL_CHECK(aclrtDeviceEnablePeerAccess(cann_ctx_src->device, 0));

ggml_cann_set_device(cann_ctx_src->device);

ACL_CHECK(aclrtDeviceEnablePeerAccess(cann_ctx_dst->device, 0));

// wait for task_queue empty to keep task order.

ACL_CHECK(aclrtMemcpyAsync(dst->data, copy_size, src->data, copy_size, ACL_MEMCPY_DEVICE_TO_DEVICE,

cann_ctx_src->stream()));

// record event on src stream after the copy

// TODO: this event is not effective with acl graph mode, change to use aclrtSynchronizeStream

// if (!cann_ctx_src->copy_event) {

// ACL_CHECK(aclrtCreateEventWithFlag(&cann_ctx_src->copy_event, ACL_EVENT_SYNC));

// }

// ACL_CHECK(aclrtRecordEvent(cann_ctx_src->copy_event, cann_ctx_src->stream()));

// // wait on dst stream for the copy to complete

// ggml_cann_set_device(cann_ctx_dst->device);

// ACL_CHECK(aclrtStreamWaitEvent(cann_ctx_dst->stream(), cann_ctx_src->copy_event));

ACL_CHECK(aclrtSynchronizeStream(cann_ctx_src->stream()));

} else {

// src and dst are on the same backend

ACL_CHECK(aclrtMemcpyAsync(dst->data, copy_size, src->data, copy_size, ACL_MEMCPY_DEVICE_TO_DEVICE,

cann_ctx_dst->stream()));

}

return true;

}

/**

* @brief Synchronizes a CANN backend.

*

* This function synchronizes the specified CANN backend by waiting for all

* operations in its associated stream to complete.

*

* @param backend Pointer to the CANN backend structure to synchronize.

*/

static void ggml_backend_cann_synchronize(ggml_backend_t backend) {

ggml_backend_cann_context * cann_ctx = (ggml_backend_cann_context *) backend->context;

ggml_cann_set_device(cann_ctx->device);

ACL_CHECK(aclrtSynchronizeStream(cann_ctx->stream()));

}

/**

* @brief Check if CANN backend can fuse the specified operation sequence

*

* This function determines whether an operation sequence starting from the specified node

* can be fused into an optimized operation in the CANN backend. Operation fusion can reduce

* memory access overhead and improve computational efficiency.

*

* @param cgraph Pointer to the computation graph

* @param node_idx Index of the starting node in the computation graph

* @param ops Sequence of operation types to check for fusion

* @return true if the operations can be fused

* @return false if the operations cannot be fused

*/

static bool ggml_cann_can_fuse(const struct ggml_cgraph * cgraph,

int node_idx,

std::initializer_list<enum ggml_op> ops) {

if (!ggml_can_fuse(cgraph, node_idx, ops)) {

return false;

}

// CANN backend supports fusing ADD + RMS_NORM operations

if ((ops.size() == 2) && ops.begin()[0] == GGML_OP_ADD && ops.begin()[1] == GGML_OP_RMS_NORM) {

ggml_tensor * add_node = cgraph->nodes[node_idx];

// TODO: support broadcast for ADD + RMS_NORM

if (add_node->src[0]->ne[0] != add_node->src[1]->ne[0] || add_node->src[0]->ne[1] != add_node->src[1]->ne[1] ||

add_node->src[0]->ne[2] != add_node->src[1]->ne[2] || add_node->src[0]->ne[3] != add_node->src[1]->ne[3]) {

return false;

}

return true;

}

return false;

}

/**

* @brief Evaluate the computation graph and optionally capture or execute it using CANN graph API.

*

* If CANN graph execution is enabled and graph capture is required, this function begins

* graph capture, runs the graph, ends capture, and stores the captured graph.

*

* Otherwise, it falls back to op-by-op execution using the CANN compute kernel dispatcher.

*

* @param cann_ctx The CANN backend context.

* @param cgraph The ggml computation graph.

* @param use_cann_graph Whether to use CANN graph execution.

* @param cann_graph_capture_required Whether graph capture is needed due to graph changes.

*/

static void evaluate_and_capture_cann_graph(ggml_backend_cann_context * cann_ctx,

ggml_cgraph * cgraph,

bool use_cann_graph,

bool cann_graph_capture_required) {

#ifdef USE_ACL_GRAPH

if (use_cann_graph && cann_graph_capture_required) { // Begin CANN graph capture

ACL_CHECK(aclmdlRICaptureBegin(cann_ctx->stream(), ACL_MODEL_RI_CAPTURE_MODE_GLOBAL));

}

#endif // USE_ACL_GRAPH

// Only perform the graph execution if CANN graphs are not enabled, or we are capturing the graph.

// With the use of CANN graphs, the execution will be performed by the graph launch.

static bool opt_fusion = parse_bool(get_env_as_lowercase("GGML_CANN_OPERATOR_FUSION").value_or(""));

if (!use_cann_graph || cann_graph_capture_required) {

for (int i = 0; i < cgraph->n_nodes; i++) {

ggml_tensor * node = cgraph->nodes[i];

if (opt_fusion) {

if (ggml_cann_can_fuse(cgraph, i, { GGML_OP_ADD, GGML_OP_RMS_NORM })) {

ggml_cann_op_add_rms_norm_fused(*cann_ctx, node, cgraph->nodes[i + 1]);

i++;

continue;

}

}

if (ggml_is_empty(node) || node->op == GGML_OP_RESHAPE || node->op == GGML_OP_TRANSPOSE ||

node->op == GGML_OP_VIEW || node->op == GGML_OP_PERMUTE || node->op == GGML_OP_NONE) {

continue;

}

if ((node->flags & GGML_TENSOR_FLAG_COMPUTE) == 0) {

continue;

}

bool ok = ggml_cann_compute_forward(*cann_ctx, node);

if (!ok) {

GGML_LOG_ERROR("%s: op not supported %s (%s)\n", __func__, node->name, ggml_op_name(node->op));

}

GGML_ASSERT(ok);

}

}

#ifdef USE_ACL_GRAPH

if (use_cann_graph) {

GGML_ASSERT(!cann_ctx->graph_lru_cache.cache_list.empty());

ggml_cann_graph * matched_graph = cann_ctx->graph_lru_cache.cache_list.front();

if (cann_graph_capture_required) { // End CANN graph capture

ACL_CHECK(aclmdlRICaptureEnd(cann_ctx->stream(), &matched_graph->graph));

}

// Execute CANN graph

ACL_CHECK(aclmdlRIExecuteAsync(matched_graph->graph, cann_ctx->stream()));

}

#endif // USE_ACL_GRAPH

}

/**

* @brief Computes a computational graph using a CANN backend.

*

* This function computes the operations defined in the computational graph

* using the specified CANN backend.

*

* @param backend Pointer to the CANN backend structure to use for computation.

* @param cgraph Pointer to the computational graph structure containing nodes

* representing operations to be computed.